An Everything Guide to Data Security Posture Management (DSPM)

Ravi Ithal, co-founder and CTO, Normalyze

What is DSPM?

Data Security Posture Management (DSPM) defines a new, data-first approach to data security. DSPM is based on the premise that data is your organization’s most important asset. The proliferation of data in modern organizations is rapidly increasing the risk of sensitive data loss or compromise. These risks make data security the #1 problem for security stakeholders, especially those using legacy strategies for protection. DSPM charts a modern path for understanding everything that affects the security posture of your data wherever it is, including in SaaS, PaaS, public or multi-cloud, on-prem, or hybrid environments. DSPM tells you where sensitive data is, who can access it, and what its security posture is. Following the guidelines and platform-based instrumentation of DSPM is the quickest way to keep your organization’s data safe and secure, wherever it is.

In this guide, you will learn about capabilities and requirements for DSPM to help your organization create strategy and tactics for addressing data security posture with a systematic, comprehensive, and effective process.

How Can DSPM Help You?

The most important benefit of DSPM is accelerating your organization’s ability to continuously keep all its data safe and secure, wherever it is. Assessing and acting on data security posture is different from other types of security posture, such as issues affecting the general cloud, applications, network, devices, identity, and so forth. Unlike these, DSPM focuses like a laser beam on your data.

Specific benefits of DSPM

As part of keeping your data safe and secure, DSPM specifically will help your security, IT operations, and DevOps teams to:

- Discover sensitive data (both structured and unstructured) across all your environments, including forgotten databases and shadow data stores.

- Classify sensitive data and map it to regulatory frameworks to identify areas of exposure and how much data is exposed, and track data lineage to understand where the data came from and who has had access to it.

- Discover attack paths to sensitive data that weigh data sensitivity against identity, access, vulnerabilities, and configurations – thus, prioritizing risks based on which are most important.

- Connect with DevSecOps workflows to remediate risks, particularly as they appear early in the application development lifecycle.

- Identify abandoned data stores, which are an easy target for attackers due to their lack of oversight and which can often be decommissioned or transferred to more affordable storage repositories for cost savings.

- Secure all of your data, including data in SaaS, PaaS, public or multi-cloud, on-prem or hybrid environments.

- Secure your LLMs and AI systems to prevent unintended exposure of sensitive data.

A modern DSPM platform automates the process

Frankly, the challenge of securing data exceeds what purely manual efforts to implement and maintain DSPM processes can handle across various teams of enterprise stakeholders. If your organization wants the benefits of DSPM (and it should!), automated systems are mandatory to ensure DSPM processes are systematic, comprehensive, and effective.

The automation of DSPM entails use of a DSPM platform. A modern DSPM platform has one major focus: to quickly and accurately assess the security posture of your organization’s data and ensure rapid remediation of vulnerabilities – both for security of the data and for compliance mandates covering various types of sensitive data.

The DSPM platform does not replace existing tools used for securing networks, desktops or other discrete environments. Instead, the DSPM platform ingests contextual data, alerts and other metrics from your existing infrastructure to enhance the accuracy of its assessment of the data stored within those environments.

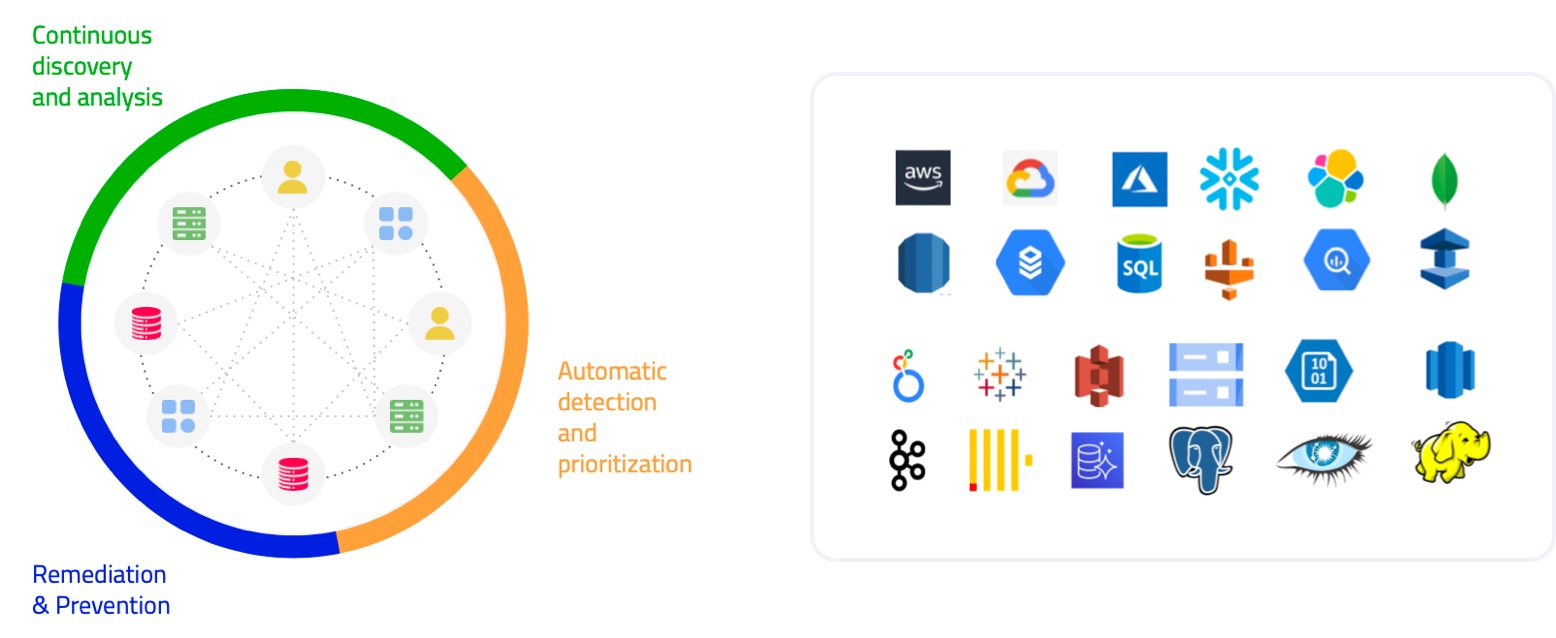

The DSPM platform also seamlessly integrates with security and operational services from your organization’s cloud service providers. These include major providers such as Amazon Web Services (AWS), Microsoft Azure (Azure), Google Cloud Platform (GCP), Snowflake and other market-leading providers. DSPM provides a critical layer on top of the security and operational tools used within the provider’s cloud, to ensure that data is classified and secured wherever it goes, across SaaS, PaaS, public or multi-cloud, on-prem or hybrid environments.

How Does DSPM Work?

One of the biggest questions for cybersecurity is, “Where is our data?” You can’t begin to secure data until you know where it is – especially critical business, customer, or regulated data. As we’ve learned in this new era of agile, your data can be almost anywhere. Getting better visibility is the first step to a process of securing data called Data Security Posture Management.

The analyst and vendor communities describe various types of posture management. They all address two general questions: What are the issues, and how can we fix them? Data Security Posture Management (DSPM) is a new prescriptive approach for securing data. As defined by Gartner in its Hype Cycle for Data Security, 2022,

“Data security posture management (DSPM) provides visibility as to where sensitive data is, who has access to that data, how it has been used and what the security posture of the data store or application is. This requires a data flow analysis to determine the data sensitivity. DSPM forms the basis of a data risk assessment (DRA) to evaluate the implementation of data security governance (DSG) policies.”

– Gartner

At a high level, DSPM entails a three-step process:

- Discover where your data is and analyze what it consists of

- Detect which data is at risk and prioritize order of remediation by mapping user access against specific datasets, and tracking data lineage to understand where it came from and who had access to the data

- Remediate vulnerabilities and prevent their reoccurrence

DSPM discovers where your data is and analyzes what it consists of

Discovery of data location is a huge issue because of the nature of agile. In DevOps and model-driven organizations, there is a vastly larger and expanding amount of structured and unstructured data that could be located almost anywhere.

In legacy scenarios, all the data was stored on premises, which spawned the “Castle & Moat” network security model of restricting external access while allowing internally trusted users. Those were the easy days of security! The need for more flexible and agile computing has fragmented the legacy architecture and resulted in the movement of significant amounts of data to external locations operated by service providers and other entities. For security architects, practitioners, and those responsible for compliance, this titanic shift in data location, along with huge growth in data volumes, calls for a different approach to securing the data: hence Data Security Posture Management.

The DSPM approach acknowledges that agile architectures are far more complex because data environments are not monolithic. For most enterprises, data is stored in many physical and virtual places: two or more cloud service providers such as Amazon, Microsoft, or Google; private clouds; software-as-a-service providers; platform-as-a-service and data lake providers such as Snowflake and Databricks; business partners; LLMs; and, of course, on-premises servers and endpoints within your own organization.

Data isn’t just moving to more places. The velocity of data creation is soaring with a modern explosion of microservices, growing frequency of changes, acceleration of access for modeling, and constant iterations of new code by DevOps. Some of the fallout for security includes shadow data stores and abandoned databases, which lure attackers like honey draws bees.

Locating your data is just the beginning. Classification analysis is needed to help your team understand the nature of the data, and to determine levels of concern as to data requirements for protection and monitoring – especially if the data is subject to compliance mandates.

DSPM detects which data is at risk and prioritizes order of remediation

The second phase of DSPM is detecting which data is at risk. A precursor is identifying all systems and related operations running in your organization’s environment. Detecting all infrastructure helps determine all the access paths to your data and which paths may require access permission changes or new controls for protection.

The issue of access rights is challenging because structured and unstructured data can be found in many types of datastores. Examples are cloud-native databases, block storage, and file storage services. For each of these, your team will need to spot access misconfigurations, inflated access privileges, dormant users, vulnerable applications, and exposed resources with access to sensitive data.

If your organization is coming up to speed on these issues, be aware that security teams must collaborate closely with data and engineering teams, because application architectures, microservices, and data stores are all changing rapidly.

Access is not the only issue for risk; so is the nature of the data. Your teams will need to prioritize the data to enable ranking its importance and risk level. Is the data proprietary, regulated, or otherwise sensitive in nature? Determination of risk is a composite of vulnerability severity, nature of the data, its access paths, and condition of its resource configurations. Higher risk means remediation becomes Priority One!
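
The composite view of risk described above can be sketched as a simple scoring function. This is only an illustration under stated assumptions, not how any particular DSPM platform computes risk: the field names, the sensitivity weights, the saturation limits, and the 0 to 100 scale are all hypothetical choices made for the example.

```python
from dataclasses import dataclass

# Hypothetical sensitivity weights; a real platform would derive these from policy.
SENSITIVITY_WEIGHT = {"public": 0.0, "internal": 0.3, "proprietary": 0.7, "regulated": 1.0}


@dataclass
class DataStoreFinding:
    name: str
    sensitivity: str         # one of the SENSITIVITY_WEIGHT keys
    vuln_severity: float     # 0.0 (none) to 1.0 (critical)
    access_path_count: int   # identities or resources that can reach the data
    misconfigurations: int   # number of failed configuration checks


def risk_score(f: DataStoreFinding) -> float:
    """Combine the four factors into a 0-100 score (illustrative weighting only)."""
    exposure = min(f.access_path_count / 10, 1.0)   # saturates at 10 access paths
    config = min(f.misconfigurations / 5, 1.0)      # saturates at 5 failed checks
    technical = 0.4 * f.vuln_severity + 0.3 * exposure + 0.3 * config
    # Sensitivity scales the result, so public data always scores 0.
    return round(100 * SENSITIVITY_WEIGHT[f.sensitivity] * technical, 1)


def prioritize(findings):
    """Order findings so the highest-risk data store is remediated first."""
    return sorted(findings, key=risk_score, reverse=True)
```

Multiplying by sensitivity, rather than adding it, reflects the idea that a severe vulnerability on a store with no sensitive data should not outrank a moderate one on regulated data.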

DSPM remediates data risks and prevents their reoccurrence

Securing data at risk entails remediating the associated vulnerabilities discovered during the Discovery and Detection phases of DSPM. In legacy scenarios, teams are often focused on securing the classic perimeter, but in modern hybrid environments, a new, much wider scope of risks must be addressed. Remediation requires a cross-functional approach. Depending on scenarios, the team will need help with network and infrastructure operations, cloud configuration management, identity management, databases, LLMs, backup policies, DevOps, and more.

Security of data is usually governed with controls provided by a particular service provider. However, the enterprise subscriber also shares a critical role in addressing several issues mostly related to configuration management:

- Identify where workloads are running

- Chart relationships between the data and infrastructure and related business processes to discover exploitable paths

- Verify user and administrator account privileges to find people with overprivileged access rights and roles

- Inspect all public IP addresses related to your cloud accounts for potential hijacking

Since the major cloud service providers do not provide integrated, interoperable security and configuration controls for disparate clouds, it’s on your organization to ensure that security access controls are properly configured for multi-cloud and hybrid environments.

What are the Key Capabilities of DSPM?

The DSPM platform will automate five domains of capabilities for assessing the security posture of data, detecting and remediating risks, and ensuring compliance. In general, it’s useful to look for a DSPM platform that is agentless and deploys natively in any of the major clouds (AWS, Azure, GCP, or Snowflake) and against leading SaaS applications and on-premises databases and file stores. The platform should provide 100% API access to easily integrate the use of any of your existing tools’ data required for using DSPM in your organization’s environment. Naturally, the platform should also use role-based access control to keep the management of data security posture just as secure as the sensitive data should be. All of these will minimize roadblocks and make DSPM quickly productive for your teams.

Data Discovery with DSPM

Discovery capability answers the question, “Where is my sensitive data?” DSPM should discover structured, unstructured, and semi-structured data across an extensive array of data stores from major cloud providers and SaaS platforms, plus various enterprise applications like Snowflake, Salesforce and Workday, as well as on-premises databases and file shares. DSPM should continuously monitor and discover new data stores and notify security teams on discovery of new data stores or objects that could be at risk.

Data Classification with DSPM

Classification tells you if your data is sensitive and what kind of data it is. It answers questions like “Are there shadow data stores?” First and foremost, you want DSPM classification capability to be automated and accurate – if the platform cannot do this automatically and accurately, it defeats the whole purpose of trying to do DSPM in modern hybrid environments.

Automation must address a variety of classification capabilities:

- Analyze actual content in data stores (vs. object/table/column names)

- Provide classifiers out of the box (no customer-defined rules required, since writing them slows you down!)

- Identify regulated data (GDPR, PCI DSS, HIPAA, etc.)

- Allow user definition of classifiers for proprietary / unique data

- Have classification identify sensitive data in newly added databases/tables/columns

- Notify security team on discovery of new sensitive data

- Scan data where it sits without any data leaving your organization’s environment

- Sample data while scanning to reduce compute costs

- Use the proximity of related sensitive data elements to increase detection accuracy

- Provide a workflow to fix false positives when sensitive data is miscategorized
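
Two of the capabilities above, analyzing actual content and using proximity to improve accuracy, can be sketched with a toy payment card classifier. This is a minimal illustration, not a production classifier: the regular expression, the keyword list in `CONTEXT_WORDS`, the 40-character window, and the confidence labels are all assumptions made for the example. The Luhn checksum is the standard check used to validate card numbers.

```python
import re

# Candidate: 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){13,19}\b")
# Hypothetical context keywords that raise confidence when found near a match.
CONTEXT_WORDS = ("card", "visa", "mastercard", "expiry", "cvv")


def luhn_valid(digits: str) -> bool:
    """Standard Luhn checksum: double every second digit from the right."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def classify_card_numbers(text: str, window: int = 40):
    """Return Luhn-valid card-like numbers found in the content itself."""
    findings = []
    lowered = text.lower()
    for m in CARD_CANDIDATE.finditer(text):
        digits = re.sub(r"\D", "", m.group())
        if not luhn_valid(digits):
            continue  # looks like a card number but fails the checksum
        nearby = lowered[max(0, m.start() - window): m.end() + window]
        confidence = "high" if any(w in nearby for w in CONTEXT_WORDS) else "medium"
        findings.append({"match": digits, "confidence": confidence})
    return findings
```

The checksum filters out digit runs such as order IDs that merely look like card numbers, and the proximity check separates a bare number from one that appears next to words like "card" or "cvv".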

Access Governance with DSPM

Access governance ensures that only authorized users are allowed to access specific data stores or types of data. DSPM’s access governance processes will also answer related questions, such as “Who can access what data?” or “Are there excessive privileges?” A platform’s automated capabilities need to include identification of all users with access to data stores. It should identify all roles with access to those data stores. DSPM should also identify all resources with access to those data stores. In relation to all of these, the platform should also track the level of privileges associated with each user/role/resource. Finally, DSPM must detect external users/roles with access to the data stores. All this information will inform analytics and help determine the level of risk associated with all your organization’s data stores.

Risk Detection and Remediation with DSPM

This domain is about functions of vulnerability management. Risk detection is a process of finding potential attack paths that could lead to a breach of sensitive data. Legacy security typically does this by focusing on the infrastructure supporting data (e.g., network gear, servers, and endpoints). DSPM focuses on detecting vulnerabilities affecting sensitive data, and insecure users with access to sensitive data. DSPM also checks data against industry benchmarks and compliance standards such as GDPR, SOC 2, and PCI DSS. The main idea is to visually map out relationships across data stores, users, and resources to guide investigation and remediation. The platform should enable building custom risk detection rules that combine sensitive data, access, risk, and configurations. It should support custom queries to find potential data security risks that are unique to your organization and environment. The platform should send notifications to specific assignees when risks are detected. Related workflows should automatically trigger third-party products such as ticketing systems. To ease usability, modern graph-powered capabilities will visualize and enable queries to spot attack paths to sensitive data.
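
The idea of querying a graph for attack paths to sensitive data can be sketched with a breadth-first search. This is a minimal illustration under stated assumptions: the node names, the `EDGES` graph, and the `SENSITIVE` set are hypothetical, and a real platform would build the graph from discovered identities, resources, and data stores rather than a hand-written dictionary.

```python
from collections import deque

# Hypothetical environment graph: an edge A -> B means "A can reach or access B".
EDGES = {
    "internet": ["web-vm"],
    "web-vm": ["app-role"],
    "app-role": ["orders-db", "logs-bucket"],
    "dev-user": ["test-bucket"],
    "orders-db": [],
    "logs-bucket": [],
    "test-bucket": [],
}
# Hypothetical set of data stores classified as sensitive.
SENSITIVE = {"orders-db", "test-bucket"}


def attack_paths(edges, start, sensitive):
    """Breadth-first search returning every simple path from start to a sensitive node."""
    paths = []
    queue = deque([[start]])
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node in sensitive and len(path) > 1:
            paths.append(path)
        for nxt in edges.get(node, []):
            if nxt not in path:  # skip nodes already on this path to avoid cycles
                queue.append(path + [nxt])
    return paths


print(attack_paths(EDGES, "internet", SENSITIVE))
# [['internet', 'web-vm', 'app-role', 'orders-db']]
```

Because breadth-first search explores shorter paths first, the shortest routes to sensitive data appear at the front of the result, which is a reasonable default order for investigation.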

Compliance with DSPM

Modern organizations must comply with a variety of laws and regulations governing sensitive data. For example, the European Union’s General Data Protection Regulation (GDPR) aims to ensure rights of EU citizens over their personal data such as names, biometric data, official identification numbers, IP addresses, locations, and telephone numbers. A tiered system of fines for non-compliance can be up to 4% of a company’s global annual turnover or 20 million Euros (whichever is greater). Other laws and standards, such as the Health Insurance Portability and Accountability Act (HIPAA), Gramm-Leach-Bliley Act (GLBA), the Payment Card Industry Data Security Standard (PCI DSS), and the new California Consumer Privacy Act (CCPA), all have mandates for securing specific types of sensitive data. DSPM must be able to automatically detect and classify all data within all your organization’s data stores related to any relevant laws and regulations. It should automate mappings of your data to compliance benchmarks. Stakeholders in your organization should get a coverage heatmap on data compliance gaps, such as misplaced personally identifiable information (PII), shadow data, or abandoned data stores with sensitive data. Data officers should receive a dashboard and report to track and manage data compliance by region, function, and so forth. In addition to ensuring security of regulated sensitive data, the platform should also simplify and accelerate producing documentation verifying compliance for auditors.

Securing AI with DSPM

DSPM should scan, identify and classify sensitive data being used in Large Language Models (LLMs) like Microsoft Copilot or ChatGPT, ensuring that AI-generated content does not expose valuable or sensitive information. In addition, it should help secure cloud-based AI deployments in AWS Bedrock and Azure OpenAI by detecting any sensitive data being fed into the foundational or custom models.

DSPM should offer specialized APIs for LLM security which can be used to conduct real-time sensitivity analysis of data going into and out of LLMs, while providing full governance and visibility into data usage. These APIs should easily integrate into existing customer workflows, helping keep data processing costs down and increasing security for services like Microsoft Copilot.

Using AI with DSPM

DSPM should make use of AI capabilities to accelerate processes and improve outcomes. Examples of where DSPM can leverage AI include increasing the accuracy of data classification as compared to traditional regex-based approaches, generating AI-driven remediation steps to accelerate remediation and improve communication between security and IT teams, adaptive learning based on user feedback and actions, and remediation validation that detects and acknowledges successful remediation.

Where Does DSPM Fit into the Modern Data Security Environment?

DSPM is a relatively new concept, designed to meet rapidly evolving requirements for securing data across all environments. DSPM has entered On the Rise “first position” in Gartner’s Hype Cycle for Data Security, 2022. Gartner assigns DSPM a “transformational” benefit rating.

“This is an urgent problem that is encouraging rapid growth in the availability and maturation of this technology.”

– Gartner

While DSPM is a new concept, subsets of its general functionality are seen in current security tools. Unfortunately, their functionality is siloed and these standalone tools do not fulfill all five major functions of DSPM required for systematic, comprehensive, and effective security of all data.

The matrix below shows how current security tools are partially addressing the five functions of DSPM in various types of data stores. Essentially, DSPM fills all the squares marked “None” and may replace tools in the other squares, especially if an organization’s use cases for particular tools are minimal. Alternatively, if an organization has significant investment in particular security tools (such as populating a CMDB with hundreds of thousands of assets, owners, business criticality, etc.), the DSPM platform can also ingest operational data, alerts, and other metrics from your existing infrastructure of corresponding tools for security, IT operations, and DevOps. Use case flexibility goes a long way with DSPM!

How is DSPM Being Used?

DSPM is used by a variety of organizations across different sectors to enhance their data security practices. Here are some of the primary users of DSPM:

- Enterprises with large data sets

- Cloud service users

- Regulated industries

- Technology companies

- Government agencies

- Small and medium-sized enterprises

DSPM Use Case 1: Automate data discovery and classification across all data stores

Two potential sources of overlooked sensitive data are shadow data stores and abandoned data stores. They often rest outside regular security controls, especially if they are ad hoc duplications made by data scientists and other data engineers for temporary testing and other purposes. This DSPM use case especially benefits security teams by staying in lock step with data and engineering teams to automatically discover, classify, and validate all data across all environments. The process includes inventorying structured and unstructured data across native databases, block storage, and file storage services.

DSPM Use Case 2: Prevent data exposure and minimize the attack surface

Organizations pursue a hybrid cloud strategy because it enables innovation – and this brings a constant evolution of architectures and changes to microservices and data stores. Security teams use DSPM to stay in lock step with data and engineering teams to ensure data exposure is minimized along with the associated attack surface. The DSPM platform will enable automatic identification of data at risk by continuously checking data stores – including abandoned or stale data stores, backups, and snapshots – and associated resources for misconfigurations, detecting vulnerable applications and exposed resources with access to sensitive data.

DSPM Use Case 3: Track data access permissions and enforce least privilege

Inappropriate access permissions enable the potential misuse or exposure of sensitive data, either by an insider’s accident or purposely by a bad actor. DSPM enables security teams to automatically get a simple, accurate view of access privileges for all data stores. The DSPM platform catalogs all users’ access privileges and compares these against actual usage to identify dormant users and those with excessive privileges. The resulting to-do list allows IT administrators to quickly correct excessive privileges or otherwise expunge dormant users whose accounts pose potential risk to the data.
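
Comparing granted privileges against actual usage can be sketched as follows. This is a minimal illustration, not a description of any platform's implementation: the input shapes (`grants`, `last_used`), the 90-day dormancy threshold, and the status labels are all assumptions made for the example.

```python
from datetime import date, timedelta


def review_access(grants, last_used, today, dormant_after_days=90):
    """Flag dormant users and excessive privileges.

    grants:    {user: set of granted privileges}
    last_used: {user: {privilege: date the privilege was last exercised}}
    """
    cutoff = today - timedelta(days=dormant_after_days)
    report = {}
    for user, privileges in grants.items():
        usage = last_used.get(user, {})
        # A privilege counts as active only if it was used on or after the cutoff.
        active = {p for p in privileges if usage.get(p) is not None and usage[p] >= cutoff}
        if not active:
            report[user] = {"status": "dormant", "revoke": sorted(privileges)}
        elif active != privileges:
            report[user] = {"status": "excessive", "revoke": sorted(privileges - active)}
    return report


grants = {"alice": {"read", "write", "delete"}, "bob": {"read"}, "carol": {"read"}}
last_used = {
    "alice": {"read": date(2024, 5, 20), "write": date(2023, 1, 1)},
    "carol": {"read": date(2024, 5, 30)},
}
print(review_access(grants, last_used, today=date(2024, 6, 1)))
# {'alice': {'status': 'excessive', 'revoke': ['delete', 'write']},
#  'bob': {'status': 'dormant', 'revoke': ['read']}}
```

Users whose granted privileges all show recent use, such as `carol` here, are left out of the report, so the output doubles as the to-do list for administrators.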

DSPM Use Case 4: Proactively monitor for compliance with regulations

Compliance audits for data security occur for a variety of mandated laws and regulations. The DSPM platform enables governance stakeholders to stay ahead of compliance and audit requirements via continuous checks against key benchmarks and associated controls. For example, PCI DSS Requirement 3 specifies that merchants must protect stored payment account data with encryption and other controls. The DSPM platform will identify stored payment account data and whether it is encrypted. Compliance activity like this is enabled by the platform’s data catalog, access privilege intelligence, and risk detection capabilities, all of which illustrate sensitive data security posture and provide evidence for compliance audits.
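
The PCI DSS example above can be sketched as a check that runs over a data catalog. This is a simplified illustration: the catalog structure, the `payment_account_data` class label, and the `encrypted_at_rest` flag are hypothetical, and encryption at rest is only one of the controls the requirement covers.

```python
def check_stored_account_data(catalog):
    """Produce one pass/fail evidence record per store that holds payment account data."""
    results = []
    for store in catalog:
        if "payment_account_data" not in store["data_classes"]:
            continue  # out of scope for this particular check
        results.append({
            "store": store["name"],
            "control": "PCI DSS Requirement 3",
            "status": "pass" if store["encrypted_at_rest"] else "fail",
        })
    return results


# Hypothetical catalog entries, as a classification scan might record them.
catalog = [
    {"name": "orders-db", "data_classes": {"payment_account_data", "pii"}, "encrypted_at_rest": True},
    {"name": "test-copy", "data_classes": {"payment_account_data"}, "encrypted_at_rest": False},
    {"name": "wiki", "data_classes": set(), "encrypted_at_rest": False},
]
for record in check_stored_account_data(catalog):
    print(record["store"], record["status"])
# orders-db pass
# test-copy fail
```

Running a check like this continuously, rather than only before an audit, is what turns the catalog into ongoing compliance evidence.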

DSPM Use Case 5: Enable use of AI

Generative AI and Large Language Models (LLMs) are introducing significant data security challenges: without proper data classification, there’s a real risk that these systems might unintentionally process and expose sensitive or valuable information. The challenge is compounded by the proliferation of ‘shadow AI’— technologies deployed directly by business teams without IT oversight. Such deployments can lead to inconsistent security practices and create vulnerabilities, as sensitive data might be used or accessed in ways that do not align with corporate data governance policies. The DSPM platform allows organizations to identify sensitive data before it is fed into LLMs and generative AI applications, so that proper steps can be taken to block it or mask it or otherwise prevent unintended exposure.
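
Masking sensitive values before a prompt reaches an LLM can be sketched as below. This is a toy illustration under stated assumptions: the two regular expressions and the placeholder labels are hypothetical stand-ins for a real classifier, and the function does not call any actual LLM or DSPM API.

```python
import re

# Illustrative patterns only; real classifiers cover far more than two data types.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def mask_prompt(prompt: str):
    """Replace detected sensitive values with placeholders and report what was found."""
    found = []
    for label, pattern in PATTERNS.items():
        if pattern.search(prompt):
            found.append(label)
            prompt = pattern.sub(f"[{label}]", prompt)
    return prompt, found


masked, found = mask_prompt("Summarize the ticket from jane.doe@example.com, SSN 123-45-6789.")
print(masked)  # Summarize the ticket from [EMAIL], SSN [US_SSN].
print(found)   # ['EMAIL', 'US_SSN']
```

Returning the list of detected types alongside the masked text lets the caller choose between masking and blocking the request outright, depending on policy.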

DSPM Use Case 6: Secure Data Lakes

With the rise of data lakes, the flexibility of PaaS environments like Snowflake has resulted in greater movement of data, more copies of data, and more users accessing data. While this flexibility is great for data analysts, it makes it harder for security teams to get visibility into and control of the sensitive data in these environments. DSPM automates discovery and classification of sensitive data, detects and prioritizes risks to data, gives visibility and control over users and roles with access to data, enhances privacy and regulatory compliance, and integrates with native security and compliance capabilities.

Why do I need DSPM?

“Castle & Moat” is the classic model of cybersecurity, which restricts external access while allowing internally trusted users. While familiarity breeds comfort, security leaders should start feeling very uncomfortable with this business-as-usual approach. We’ve seen a never-ending stream of successful attacks and data breaches, so Castle & Moat is unreliable. It’s also misplaced because attackers aren’t going after your castle. Their real target is your data, and in these days of agile, that could be almost anywhere! And what makes you confident that attackers are not already inside the castle?

Here are six reasons why you should consider putting data at the center of security strategy instead of relying on a legacy Castle & Moat approach.

1. CI/CD brings an explosion of deployments and new changes

The constant change in business requirements has fueled the need for automating the stages of application development. Continuous integration and continuous delivery (CI/CD) accelerates application development and makes frequent changes to a codebase. If you’re not familiar with CI/CD, think of it this way: Your organization’s application developers (your DevOps team) are shipping brand new functionality in your organization’s apps not once a month or even once a week — think 5, 10, or 15 times or more every day. To handle short deadlines like this, DevOps uses an array of tools to deliver that new code.

DevOps team members are to be congratulated for their greater agility, but quick code turns can and do add risks for data security. The risk of data leakage rises with the increased complexity and higher velocity of services and changes.

Data is especially at risk with DevOps constantly spinning up instances and links to data repositories — especially with temporary buckets or forgotten copies of data used for testing apps.

2. AI/ML fuels the need for more access to data for modeling

Compared to legacy apps, machine learning (ML) workloads require enormous amounts of both structured and unstructured data to build and train models. As data scientists experiment with models and evolve them for new business requirements, new data stores are created for testing and training. This constant movement of production data into nonproduction environments may expose it to potential exploits. Putting data at the center of your security strategy will help ensure that controls are extended to wherever data is—be it inside or outside of production environments.

3. Microservices drive more services and granular data access

The cardinal rule of football, basketball, baseball and other ball games is to keep your eye on the ball. The same lesson applies to data security: Keep your eye on the data. Doing so was easier for legacy applications, which were built with a three-tier architecture and a single data store. In that scenario, protecting application data merely required protecting that one database.

Modern app development uses multiple microservices with their own data stores that contain overlapping pieces of application data. This vastly complicates securing data, especially as new features often introduce new microservices with more data stores. The number of paths of access to these data stores also increases quadratically over time. Continuously reviewing the security posture of these multiplying data stores and access paths by hand is impossible—and is one more reason for using automation to help keep the team’s eye on the data.

4. Data proliferation brings more copies into more places

The proliferation of copies of data in different storage locations is a big issue for organizations using infrastructure as a service and infrastructure as code options. These architectures allow getting things done quickly, but “faster” often means there’s no one looking over your shoulder to apply security checks to the expanding data. Putting data at the forefront of your security policy will help provide the ability to automatically follow data to wherever it’s stored and automatically apply security controls to ensure the data is protected from unauthorized access.

5. The security of cloud infrastructure suffers when data access is misconfigured

Access authorization is a pillar of data security. Obviously, if there’s no access authorization in place, the data is a sitting duck for attackers. But what if authorization controls are improperly implemented? Did someone simplify or remove them to facilitate easy use by DevOps? Are controls consistently applied to data wherever it is? Most cloud breaches are due to the misconfiguration of the cloud infrastructure (IaaS and PaaS), according to Gartner analysts. A data-first approach to security should ensure that access configurations for data are properly used wherever data is.

6. Privacy regulations require more control and tracking of data

Compliance is a significant driver of data security. Examples include personally identifiable data for GDPR, payment account data and sensitive authentication data for PCI DSS and personal health data for HIPAA. Noncompliance in protecting sensitive data like these can trigger substantial penalties. A data-first security policy should enable automatic discovery and classification of all protected data anywhere it is in the environment.

Data is your organization’s most valuable asset. It’s imperative for security teams to have 100% visibility into where sensitive data is to ensure it’s protected. Using a legacy castle-and-moat approach to security will fall short in modern environments. For the reasons mentioned, adopting a data-first strategy for security is important for keeping data secure. And that’s the purpose of DSPM.

How to Get Started with DSPM

Getting ready to start a DSPM platform trial is fairly simple, particularly if the provider is using an agentless model for its solution. You’ll need the following:

- Identify existing provider(s) … AWS, Azure, GCP, etc.

- Gather account details, such as account ID, nickname, etc.

- List authorized user(s), including name, title, email address, and other details needed for the people who will operate the DSPM trial and proof of concept.

Typically, you will enter this information into the DSPM provider’s account signup form. The trial team should consider gathering a known inventory of data stores in the organization’s infrastructure prior to the trial. The inventory will provide a benchmark to compare what you think you know about the organization’s data to what DSPM discovers is really out there. Prepare to be surprised!
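
The benchmark comparison described above amounts to a set difference between the inventory you already have and what the scan finds. A minimal sketch follows; the store names and the category labels (`shadow`, `missing`, `confirmed`) are hypothetical.

```python
def compare_inventory(known, discovered):
    """Compare the data stores you believe exist with those a discovery scan found."""
    known, discovered = set(known), set(discovered)
    return {
        "shadow": sorted(discovered - known),    # found by the scan but absent from your records
        "missing": sorted(known - discovered),   # in your records but not found by the scan
        "confirmed": sorted(known & discovered), # present in both
    }


known = ["orders-db", "hr-share", "old-archive"]
discovered = ["orders-db", "hr-share", "ml-test-bucket"]
print(compare_inventory(known, discovered))
# {'shadow': ['ml-test-bucket'], 'missing': ['old-archive'], 'confirmed': ['hr-share', 'orders-db']}
```

The `shadow` list is usually the interesting one during a trial, while entries under `missing` may point to stores that were decommissioned or that sit outside the accounts you connected.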

Sign up for a DSPM Platform Demo

We encourage you to start your investigation of DSPM by trying a live platform in your own environment. Normalyze is a pioneering provider of data security solutions helping customers secure their data, applications, identities, and infrastructure wherever it is. With Normalyze, organizations can discover and visualize their data attack surface within minutes and get real-time visibility and control into their security posture including access, configurations, and sensitive data to secure data at scale. The Normalyze agentless and machine-learning scanning platform continuously discovers resources, sensitive data, and access paths across all environments.

Start your DSPM journey by requesting a demo of the Normalyze DSPM platform.

Ravi Ithal

Ravi has an extensive background in enterprise and cloud security. Before Normalyze, Ravi was the cofounder and chief architect of Netskope, a leading provider of cloud-native solutions to businesses for data protection and defense against threats in the cloud. Prior to Netskope, Ravi was one of the founding engineers of Palo Alto Networks (NASDAQ: PANW). Prior to his time at Palo Alto Networks, Ravi held engineering roles at Juniper (NASDAQ: JNPR) and Cisco (NASDAQ: CSCO).